Led by Prof. Zhiqiang Zheng, our NuBot team was founded in 2004. Currently we have two full professors (Prof. Zhiqiang Zheng and Prof. Hui Zhang), one associate professor (Prof. Huimin Lu), one assistant professor (Dr. Junhao Xiao), and several graduate students. To date, eight team members have obtained doctoral degrees for research on the RoboCup Middle Size League (MSL), and more than 20 have obtained master's degrees. For more details on each member, please see NuBoters.

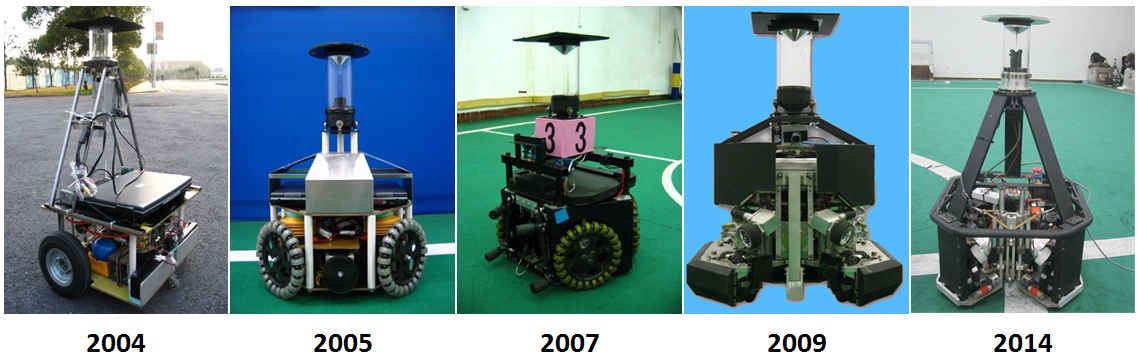

As shown in the figure below, five generations of robots have been created since 2004. We initially participated in the RoboCup Simulation and Small Size League (SSL). Since 2006, we have been actively participating in RoboCup MSL: we have been to Bremen, Germany (2006), Atlanta, USA (2007), Suzhou, China (2008), Graz, Austria (2009), Singapore (2010), Eindhoven, Netherlands (2013), João Pessoa, Brazil (2014), Hefei, China (2015), Leipzig, Germany (2016), Nagoya, Japan (2017), Montréal, Canada (2018), and Sydney, Australia (2019). We have also been participating in RoboCup China Open since it was launched in 2006.

The NuBot robots have been employed not only for RoboCup but also as an ideal test bed for research beyond robot soccer. As a result, we have published more than 70 journal and conference papers. For more details, please see the publication list. Our current research mainly focuses on multi-robot coordination, robust robot vision, and formation control.

The following items are our team description papers (TDPs), which illustrate our research progress over the past years.

The team description paper can be downloaded here; compared with our 2015 TDP, its main contribution is a Gazebo-based simulation system.

[1] Xiong, D., Xiao, J., Lu, H., Zeng, Z., Yu, Q., Huang, K., ... & Zheng, Z. (2015). The design of an intelligent soccer-playing robot. Industrial Robot: An International Journal, 43(1). [PDF]

[2] Yao, W., Dai, W., Xiao, J., Lu, H., & Zheng, Z. (2015). A Simulation System Based on ROS and Gazebo for RoboCup Middle Size League, IEEE Conference on Robotics and Biomimetics, Zhuhai, China. [PDF]

[3] Lu, H., Yu, Q., Xiong, D., Xiao, J., & Zheng, Z. (2015). Object Motion Estimation Based on Hybrid Vision for Soccer Robots in 3D Space. In RoboCup 2014: Robot World Cup XVIII (pp. 454-465). Springer International Publishing. [PDF]

[4] Lu, H., Li, X., Zhang, H., Hu, M., & Zheng, Z. (2013). Robust and real-time self-localization based on omnidirectional vision for soccer robots. Advanced Robotics, 27(10), 799-811. [PDF]

[5] Lu, H., Yang, S., Zhang, H., & Zheng, Z. (2011). A robust omnidirectional vision sensor for soccer robots. Mechatronics, 21(2), 373-389. [PDF]

2nd place in MSL technique challenge in RoboCup 2015, Hefei, China

3rd place in MSL scientific challenge in RoboCup 2015, Hefei, China

6th place in the MSL of RoboCup 2015, Hefei, China

5th place in RoboCup 2014, João Pessoa, Brazil, July 19th~25th

3rd place in MSL of 9th RoboCup China Open, October 10th~12th, Hefei, China

3rd place in both MSL technique challenge of RoboCup ChinaOpen, October 10th~12th, Hefei, China

Reached the top 8 teams in the MSL of RoboCup 2013, Eindhoven

Champion in the MSL technical challenge 1 of 8th RoboCup China Open, Dec 18th~20th, Hefei, China

The qualification video for RoboCup 2016 Leipzig, Germany should be shown below. If it does not appear, it can be found at our Youku channel (recommended for users in China) or our YouTube channel (recommended for users outside China).

NuBot Team Mechanical and Electrical Description together with a Software Flow Chart can be downloaded here.

Junhao Xiao, a member of the NuBot team, serves the MSL community as a member of the TC of RoboCup 2016 Leipzig, Germany. He also served as a member of the TC and OC of RoboCup 2015 Hefei, China, and was appointed local chair of RoboCup 2015 MSL.

Huimin Lu, a member of the NuBot team, served the MSL community as a member of the TC and OC of RoboCup 2008 Suzhou, and was also appointed local chair of RoboCup 2008 MSL. He was a member of the TC of RoboCup 2011 Istanbul.

Crowd navigation has become an increasingly prominent problem in robotics. The main challenge comes from the lack of understanding of pedestrians' behaviors. Encouraged by the great achievements in trajectory prediction, the twin field of crowd navigation, this work focuses on integrating trajectory prediction with path planning and proposes a crowd navigation algorithm named RHC-T (Receding Horizon Control with Trajectron++). It consists of two independent modules: one for trajectory prediction and another for receding horizon control. Benefiting from the trajectory prediction module, RHC-T builds up an explicit understanding of pedestrians' behaviors in the form of predicted trajectories. Based on the formulation of receding horizon control, the proposed algorithm can naturally deal with the time-varying obstacle constraints from pedestrians. Furthermore, extensive experiments are performed on two pedestrian trajectory datasets, ETH and UCY, to evaluate the proposed algorithm in a more realistic way than previous works. Experimental results show that RHC-T significantly reduces the intervention to pedestrians and navigates the robot along time-efficient paths. Compared with three baseline algorithms, RHC-T achieves better performance, with an improvement in the intervention rate and navigation time of at least 8.00% and 3.88%, respectively.
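The receding-horizon idea in the abstract above can be illustrated with a minimal sketch. It is not the RHC-T implementation: a constant-velocity extrapolation stands in for Trajectron++, a small set of constant-velocity candidates stands in for whatever optimizer the paper uses, and every function name, parameter, and cost weight here is hypothetical.

```python
import math

def predict_constant_velocity(pos, vel, horizon, dt):
    """Stand-in predictor (assumption): extrapolate at constant velocity."""
    return [(pos[0] + vel[0] * dt * k, pos[1] + vel[1] * dt * k)
            for k in range(1, horizon + 1)]

def rollout(robot, vel, horizon, dt):
    """Robot positions over the horizon if it keeps velocity `vel`."""
    return [(robot[0] + vel[0] * dt * k, robot[1] + vel[1] * dt * k)
            for k in range(1, horizon + 1)]

def plan_step(robot, goal, predictions, horizon=5, dt=0.5,
              speed=1.0, safe_dist=0.6):
    """Return the candidate velocity with the lowest cost over the horizon."""
    candidates = [(0.0, 0.0)] + [
        (speed * math.cos(2 * math.pi * i / 16),
         speed * math.sin(2 * math.pi * i / 16)) for i in range(16)]
    best_cost, best_vel = math.inf, (0.0, 0.0)
    for vel in candidates:
        path = rollout(robot, vel, horizon, dt)
        # Time-varying obstacle constraint: step k of the robot is checked
        # against step k of every predicted pedestrian trajectory.
        violations = sum(
            1 for k, p in enumerate(path) for pred in predictions
            if math.dist(p, pred[k]) < safe_dist)
        # Goal term: closest approach to the goal within the horizon.
        cost = 1000.0 * violations + min(math.dist(p, goal) for p in path)
        if cost < best_cost:
            best_cost, best_vel = cost, vel
    return best_vel

def navigate(robot, goal, pedestrians, max_steps=100,
             horizon=5, dt=0.5, goal_tol=0.25):
    """Re-plan at every step and apply only the first control of each plan.

    pedestrians: list of (position, velocity) pairs.
    """
    path = [robot]
    for _ in range(max_steps):
        if math.dist(robot, goal) < goal_tol:
            break
        predictions = [predict_constant_velocity(p, v, horizon, dt)
                       for p, v in pedestrians]
        vx, vy = plan_step(robot, goal, predictions, horizon, dt)
        robot = (robot[0] + vx * dt, robot[1] + vy * dt)
        # Advance the simulated pedestrians by one time step.
        pedestrians = [((p[0] + v[0] * dt, p[1] + v[1] * dt), v)
                       for p, v in pedestrians]
        path.append(robot)
    return path

# A pedestrian walking toward the robot along the x axis.
trajectory = navigate((0.0, 0.0), (5.0, 0.0), [((4.0, 0.0), (-0.5, 0.0))])
```

Because only the first control of each plan is executed before re-planning, updated predictions enter the constraint set at every step, which is the property the abstract attributes to the receding horizon formulation.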

This video is the accompanying video of the paper: Jiayang Liu, Junhao Xiao, Huimin Lu, Zhiqian Zhou, Sichao Lin, Zhiqiang Zheng. Terrain Assessment Based on Dynamic Voxel Grids in Outdoor Unstructured Environments

Abstract: For ground robots working in outdoor unstructured environments, terrain assessment is a key step for path planning. In this paper, we propose a novel terrain assessment method. The raw 3D point clouds are segmented based on dynamic voxel grids, then the untraversable areas are extracted and stored in the form of 2D occupancy grid maps. Afterwards, only the traversable areas are processed and stored in the form of 2.5D digital elevation maps (DEMs). In this way, the efficiency of the terrain assessment is improved and the query space of terrain feature information is reduced. To evaluate the proposed algorithm, an approach operating directly on point clouds serves as the baseline. According to the experimental results, our method performs better in both assessment time and query efficiency.
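The two-stage storage described in the abstract can be sketched roughly as follows. This is an illustration under simplifying assumptions, not the paper's method: it uses fixed-size grid cells rather than dynamic voxel grids, and the height-span criterion, the function name, and the thresholds are all hypothetical.

```python
from collections import defaultdict
from math import floor

def assess_terrain(points, cell_size=0.5, max_height_span=0.3):
    """Split a point cloud into an occupancy grid and a 2.5D elevation map.

    points: iterable of (x, y, z) tuples in metres.
    Returns (occupied, dem): `occupied` is the set of (ix, iy) cells judged
    untraversable; `dem` maps every other observed cell to its mean height.
    """
    # Bin the points into fixed-size columns (assumption: the paper's
    # dynamic voxel sizing is replaced by a constant cell size).
    cells = defaultdict(list)
    for x, y, z in points:
        cells[(floor(x / cell_size), floor(y / cell_size))].append(z)

    occupied = set()
    dem = {}
    for cell, heights in cells.items():
        # Assumed criterion: a large vertical extent marks an obstacle.
        if max(heights) - min(heights) > max_height_span:
            occupied.add(cell)
        else:
            # Only traversable cells get an elevation value.
            dem[cell] = sum(heights) / len(heights)
    return occupied, dem

cloud = [
    (0.1, 0.1, 0.00), (0.2, 0.3, 0.05),  # nearly flat ground
    (0.7, 0.1, 0.00), (0.8, 0.2, 1.20),  # wall-like column
    (1.2, 0.4, 0.10),                    # single ground return
]
occupied, dem = assess_terrain(cloud)
```

Keeping obstacles in a plain 2D set and heights only for traversable cells mirrors the abstract's point that elevation queries then touch a smaller space than the full point cloud.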

The team description paper can be downloaded from here.

[1] Wei Dai, Huimin Lu, Junhao Xiao and Zhiqiang Zheng. Task Allocation without Communication Based on Incomplete Information Game Theory for Multi-robot Systems. Journal of Intelligent & Robotic Systems, 2018. [PDF]

3rd place in MSL scientific challenge in RoboCup 2019, Sydney, Australia

1st place in MSL technique challenge in RoboCup 2019, Sydney, Australia

4th place in MSL of RoboCup 2019, Sydney, Australia

2018

4th place in MSL scientific challenge in RoboCup 2018, Montréal, Canada

3rd place in MSL technique challenge in RoboCup 2018, Montréal, Canada

4th place in MSL of RoboCup 2018, Montréal, Canada

2nd place in MSL of RoboCup 2018 ChinaOpen, ShaoXing, China

2nd place in MSL technique challenge of RoboCup 2018 ChinaOpen, ShaoXing, China

3rd place in MSL scientific challenge in RoboCup 2017, Nagoya, Japan

3rd place in MSL technique challenge in RoboCup 2017, Nagoya, Japan

4th place in MSL of RoboCup 2017, Nagoya, Japan

3rd place in MSL of RoboCup 2017 ChinaOpen, RiZhao, China

1st place in MSL scientific challenge of RoboCup 2017 ChinaOpen, RiZhao, China

4. Qualification video

The qualification video for RoboCup 2021 (Virtual) can be found at our Youku channel (recommended for users in China) or our YouTube channel (recommended for users outside China).

5. Mechanical and Electrical Description and Software Flow Chart

NuBot Team Mechanical and Electrical Description together with a Software Flow Chart can be downloaded from here.

Zhiqian Zhou, a member of the NuBot team, served the MSL community as a member of the OC of RoboCup 2019 Sydney and the TC of RoboCup 2020 Bordeaux, France.

We built a dataset for robot detection which contained fully annotated images acquired from MSL competitions. The dataset is publicly available at: https://github.com/Abbyls/robocup-MSL-dataset

7. Declaration regarding mixed team

No!

8. Declaration regarding 802.11b AP

No!

9. MAC address

The list of our team's MAC addresses can be downloaded from here.


4. Qualification video

The qualification video for RoboCup 2020 Bordeaux, France can be found at our Youku channel (recommended for users in China) or our YouTube channel (recommended for users outside China).


| | Name |
| --- | --- |
| Founder and director | Prof. Dr. Zhiqiang Zheng |
| Staff | Prof. Dr. Hui Zhang, Associate Prof. Dr. Huimin Lu, Associate Prof. Dr. Junhao Xiao, Dr. Zhiwen Zeng, Dr. Ming Xu, Dr. Qinghua Yu, Dr. Kaihong Huang |
| Graduate students | Xiaoxiang Zheng, Wei Dai, Weijia Yao, Xieyuanli Chen, Bailiang Chen, Bingxin Han (female), Xiao Li, Zhiqian Zhou, Shanshan Zhu (female), Chenghao Shi, Zirui Guo, Pengming Zhu, Zhengyu Zhong, Yang Zhao, Chuang Cheng, Wenbang Deng, Che Guo, Haoran Ren, Daoxun Zhang, Junqi Zhang, Yao Li, Zhiwen Zhang |
| Alumni | Dr. Lin Liu, Dr. Fei Liu, Dr. Xiucai Ji, Prof. Dr. Wenjie Shu, Dr. Dan Hai, Associate Prof. Dr. Xiangke Wang, Dr. Shaowu Yang, Dr. Lina Geng (female), Dr. Shuai Tang, Dr. Jie Liang, Dr. Xiabin Dong, Dr. Dan Xiong, Mrs. Wei Liu, Mr. Yupeng Liu, Mr. Dachuan Wang, Mr. Baifeng Yu, Mr. Fangyi Sun, Mr. Lianhu Cui, Mr. Shengcai Lu, Mr. Peng Dong, Mr. Yubo Li, Mr. Xiaozhou Zhu, Mr. Qingzhu Cui, Mr. Xingrui Yang, Mr. Kaihong Huang, Mr. Shuai Cheng, Mr. Xiaoxiang Zheng, Mr. Yunlei Chen, Mr. Xianglin Yang, Mr. Yu Zhang, Mrs. Yaoyao Lan, Mr. Yuxi Huang, Mr. Yi Liu, Mr. Yuhua Zhong, Mr. Qiu Cheng, Mr. Junkai Ren, Mr. Peng Chen, Mrs. Minjun Xiong, Mr. Pan Wang, Mrs. Sha Luo, Mr. Runze Wang, Mr. Junchong Ma, Mrs. Ruoyi Yan, Mr. Yi Li, Mr. Qihang Qiu, Mr. Shaozun Hong |